If Your AI "Hallucinates" a €10M Deduction, Who Signs the Penalty Check?

Thoughts on AI liability rules and building software for defensible tax reporting

I have been watching the rapid rollout of AI assistants across the tax/accounting/finance tech stack—from Brex Assist and Xero’s JAX to Intuit Assist. These tools are incredible at turning fragmented, manual processes into centralized, automated engines.

But amidst these rapid developments, I've been asking the same question: if your AI “hallucinates” a €10M tax deduction based on a misinterpretation of a cross-border VAT treaty, who signs the penalty check?

So, I decided to analyse the consequences focusing on two aspects:

What is the current guidance on using AI for tax reporting from tax authorities around the world? (Note: my research is not tax advice and is based on publicly available sources. Consult tax professionals for further guidance.)

How should product managers, engineers, and tax managers approach incorporating this guidance when integrating AI into critical systems?

Global Research Summary: AI Liability & Tax Compliance (2026)

To answer the first question, I dove into publicly available, country-specific guidance. Here are some observations from across the globe.

1. The UK & EU: The “Liability Hammer”

United Kingdom: The FRC’s March 2026 guidance is the global gold standard—audit partners and directors are 100% accountable for AI outputs. The precedent set in Gary Elden v HMRC (2026) proves that “unchecked AI use” is now legally categorized as professional incompetence. As the regulator says: “You can’t blame it on the box.”

European Union: As of August 2026, the EU AI Act is fully enforceable. Penalties for “high-risk” financial AI errors can hit €35M or 7% of global turnover.

France & Poland: France’s 2026 e-invoicing mandate and Poland’s KSeF system have removed the buffer. Taxpayers—not their software providers—remain solely liable for every data point transmitted.

2. APAC: Governance of “Agentic” AI

Singapore: Budget 2026 introduces a 400% tax deduction for AI expenditure, but with strings attached. Singapore has launched the world’s first Model Governance Framework for Agentic AI, emphasizing that “meaningful human accountability” must remain central to deployment.

Japan: The 2026 Tax Reform incentivizes AI R&D but tightens intra-group transaction documentation. The NTA is moving toward Tax Administration 2.0, using AI to automatically match NTA data to identify taxpayer errors.

New Zealand: Inland Revenue (IRD) has confirmed that “human in the loop” oversight is mandatory for all AI workflows.

Australia: The ATO now requires an AI Transparency Statement from all entities that use AI in tax-affecting decisions.

3. LATAM: Transparency & “Risk-Based” Compliance

Brazil: The new Taxpayer Protection Code (TPC) introduces the “habitual tax debtor” status. If your AI creates unjustified non-payment, you lose tax benefits.

Mexico: The 2026 Master Plan targets improper deductions identified by SAT’s AI. If your system cannot prove the “materiality” of a transaction, the deduction is denied.

Argentina: Law No. 11,089 mandates that any AI used for tax settlement must be subject to external, independent audits.

4. North America: The Enforcement Surge

USA (IRS): The IRS has expanded its AI use cases to 126, with a focus on large partnerships. They use AI to catch the “hallucinations” in your filings. Without contemporaneous support, your AI-driven deduction is a red flag.

Canada: The CRA 2026-27 Plan treats AI errors the same as manual ones.

Based on this research, here is a summary of where global authorities sit on the “AI Sentiment Spectrum”:

Guide to the Spectrum:

Zone 1: Innovation-First

Core Philosophy: AI is viewed as a national competitive advantage. Regulators prioritize adoption and “safe harbor” provisions for early movers.

Examples: In Singapore, Budget 2026 offers massive tax deductions (up to 400%) for AI implementation, provided companies follow the Model Governance Framework for Agentic AI.

The Trade-off: High transparency is expected, but the focus is on “Cooperative Compliance” rather than punitive measures.

Zone 2: Balanced Scrutiny

Core Philosophy: “Trust, but verify.” These authorities use AI aggressively for their own enforcement (e.g., the IRS’s 126 use cases) and expect taxpayers to maintain a high bar of documentation.

Examples: Australia (ATO) and the USA have effectively mandated “Contemporaneous Support.” If an AI picks a deduction, you must be able to surface the underlying data trail immediately.

The Trade-off: No special penalties for AI errors yet, but standard penalties are applied strictly with a lower tolerance for “clerical error” excuses.

Zone 3: Liability-First

Core Philosophy: “The AI black box is no defense.” Algorithmic mistakes are treated as human professional negligence.

Examples: The EU AI Act (enforced Aug 2026) imposes fines up to 7% of global turnover. In the UK, the FRC’s stance is that a hallucination is proof of a lack of professional skepticism.

The Trade-off: Speed is secondary to Auditability. In these regions, a “Black Box” AI system is essentially a ticking legal time bomb for a CFO.

While most tax authorities accept the adoption of AI, their tolerance for algorithmic error varies wildly, from leniency to treating errors as professional negligence. My prediction is that, depending on how fast AI for tax reporting matures, most authorities will become stricter in penalising errors attributed to AI.

So, what do we do to prepare for this seismic shift? The following sections offer some thoughts.

1️⃣ The Tech Maturity Gap: Breaking the “Tech Stack Prison”

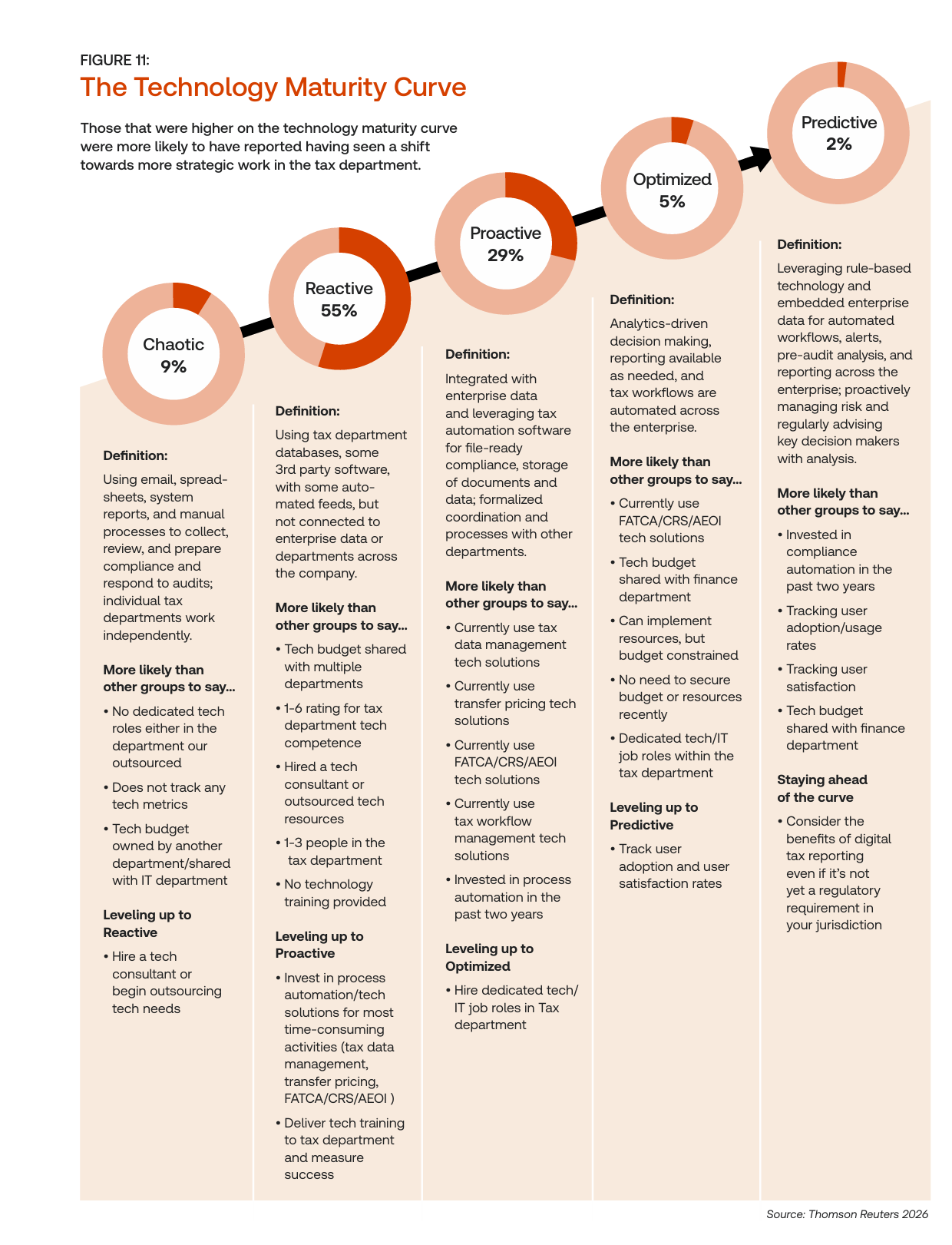

In my experience building at S&P 500 scale, the biggest risk isn’t the AI itself; it’s the Maturity Gap. The Thomson Reuters 2026 Corporate Tax Department Technology Report uses the term to describe how far a company’s tech stack has advanced through the stages of technological maturity, meaning the typical steps a corporate tax department travels through on its journey toward a more proactive approach to tax work.

According to the report, 55% of the surveyed companies are stuck in Stage 2: Reactive. This stage includes:

Using tax department databases and some third-party software with some automated feeds, but not connected to enterprise data or departments across the company.

Tech budget shared with multiple departments

Hired a tech consultant or outsourced tech resources

No technology training provided

Needs investment in process automation/tech solutions for most time-consuming activities (tax data management, transfer pricing, FATCA/CRS/AEOI)

More robust metrics needed to measure success

In essence, these companies have an outdated tech stack that makes any scalable AI usage impossible. To escape this “tech stack prison,” they must move up the Technological Maturity Curve to reach the Optimized and Predictive stages (Stages 4-5). At those stages, the tax logic is a standalone platform capability. This is the only way to scale sustainably.

2️⃣ The Audit Trap: Defining “Reasonable Care” in a Neural Network

How do you prove “reasonable care” when your tax logic is buried in a neural network?

In a traditional audit, you show your work via spreadsheets or law citations. In an AI world, regulators are shifting the definition of “care” from what you filed to how you governed the system that filed it.

As the ACCA’s 2026 Topical Guidance notes: “Members should avoid overstating the accuracy of the data output... Undertaking appropriate due diligence on the output provided by an AI tool can help safeguard against the risks.”

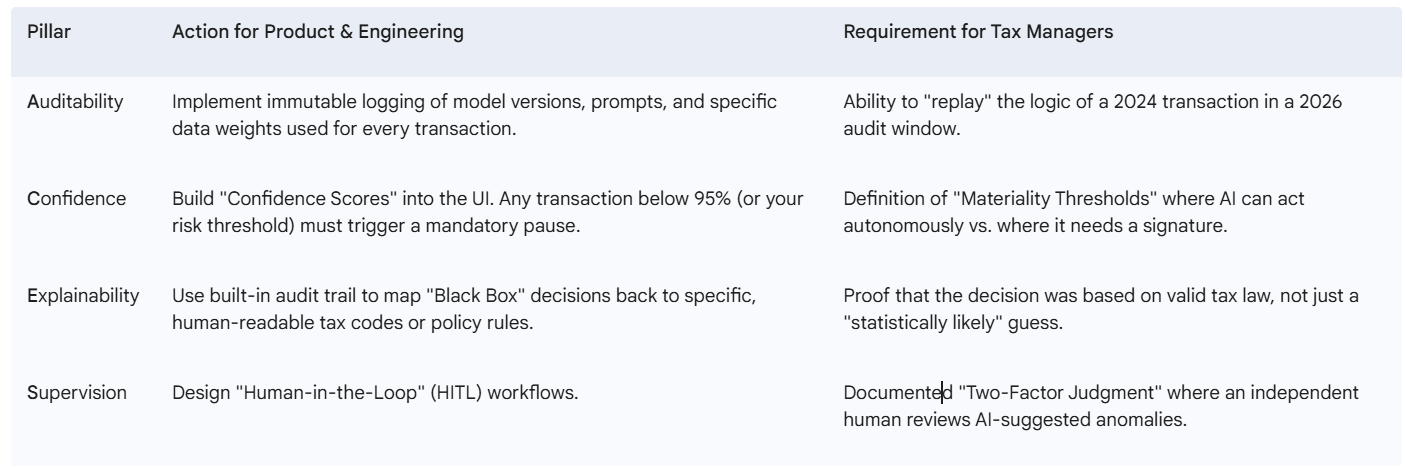

The “A.C.E.S.” Framework for Defensible AI

To get a step closer to complying with the updated reasonable care definition, I suggest the A.C.E.S. Framework—a four-pillar approach to ensuring any AI is audit-ready and legally defensible:

Through these pillars, any company using AI for tax reporting can start building a defensible reporting position. The ultimate audit trap isn’t the AI’s error; it’s the inability to explain it. In a world of probabilistic logic, ‘reasonable care’ requires a deterministic framework such as A.C.E.S.

3️⃣ The Penalty Reality: Moving to “Rules as Code”

If you are sourcing a system today, move beyond the “AI” hype. Tax requirements shouldn’t be a one-off ticket; they need to be a reusable logic library.

As the OECD and its Observatory of Public Sector Innovation (OPSI) have championed in their Rules as Code (RaC) work, the goal is to transform legislation into machine-consumable code. To incorporate this at your enterprise, I suggest you consider the following:

Rule Atomization: Decouple tax logic from your ERP. Store rules in a modular “Source of Truth” (using engines like OpenFisca or Drools).

API-First Consumption: Expose these rules via a Tax Service Layer. Any system—whether it’s a checkout page or a GenAI assistant—must call this central library for a deterministic check.

Governance Wrappers: Wrap AI probabilistic insights within human-defined, deterministic rules to ensure an immutable audit trail.

If you source your tax reporting software from a third party, make sure these capabilities are in place before signing a contract.
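To make the three practices above more concrete, here is a minimal Python sketch, not a production design. It keeps one atomized rule in a small in-memory library, exposes a single service-layer function, and records every decision on an AI-suggested deduction. The rule ID `demo.deduction_cap`, the 50% cap, and all function names are hypothetical and do not reflect real tax law or the API of OpenFisca or Drools.

```python
from dataclasses import dataclass
from decimal import Decimal
from typing import Callable, Dict, List


@dataclass(frozen=True)
class Rule:
    """One atomized tax rule, stored outside the ERP."""
    rule_id: str
    version: str
    max_deduction: Callable[[dict], Decimal]  # deterministic cap for a transaction


# Rule library acting as the modular "Source of Truth".
RULES: Dict[str, Rule] = {}
# Append-only record of every governed decision.
AUDIT_LOG: List[dict] = []


def register(rule: Rule) -> None:
    RULES[rule.rule_id] = rule


# Hypothetical rule: deductions are capped at 50% of the expense (not real tax law).
register(Rule(
    rule_id="demo.deduction_cap",
    version="2026-01",
    max_deduction=lambda tx: Decimal(tx["expense"]) * Decimal("0.50"),
))


def check_deduction(rule_id: str, tx: dict, ai_suggested: Decimal) -> dict:
    """Service-layer entry point: validate an AI suggestion against a rule."""
    rule = RULES[rule_id]
    allowed = rule.max_deduction(tx)
    decision = {
        "rule_id": rule.rule_id,
        "rule_version": rule.version,
        "ai_suggested": ai_suggested,
        "allowed": allowed,
        "accepted": ai_suggested <= allowed,
    }
    AUDIT_LOG.append(decision)
    return decision


# An AI assistant proposes a 10M deduction on a 1,000 expense.
result = check_deduction("demo.deduction_cap", {"expense": "1000"}, Decimal("10000000"))
print(result["accepted"], result["allowed"])
```

In this sketch the oversized suggestion is rejected because it exceeds the deterministic cap, and the log entry records which rule version made the call. In a real system the rule library would sit behind an API and the log would live in durable, access-controlled storage.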

🎯 The Bottom Line: Strategic Mandates for Defensible Tax Reporting Using AI

The era of “black box” tax automation is officially over. To reach the next level of global digital transformation and mitigate the €35M risk, senior leadership must adopt the following three mandates:

I. Accountability: Human-Centric Liability

“You cannot blame it on the box.” Regulatory mandates in the UK (FRC) and the EU (AI Act) have made it clear: liability for AI hallucinations sits squarely with human partners and company directors. Neither the software vendor nor the AI model can hold the burden of proof. Organizations must move from passive consumption of AI outputs to active, documented oversight.
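One possible way to make “documented oversight” tangible is a tamper-evident review log, where each entry stores the hash of the previous one so that later edits become detectable. The sketch below is an assumption about how such a log could look, not a regulatory requirement; the field names (`reviewer`, `decision`) and function names are hypothetical.

```python
import hashlib
import json
from typing import List

GENESIS = "0" * 64  # placeholder hash for the first entry


def _digest(prev_hash: str, payload: dict) -> str:
    # Hash the previous hash together with the payload in a stable key order.
    body = json.dumps({"prev": prev_hash, "payload": payload}, sort_keys=True)
    return hashlib.sha256(body.encode("utf-8")).hexdigest()


def append_entry(log: List[dict], payload: dict) -> dict:
    """Append a review record that is chained to the previous entry."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    entry = {"prev": prev_hash, "payload": payload, "hash": _digest(prev_hash, payload)}
    log.append(entry)
    return entry


def verify(log: List[dict]) -> bool:
    """Recompute every hash; any edited or reordered entry breaks the chain."""
    prev_hash = GENESIS
    for entry in log:
        if entry["prev"] != prev_hash:
            return False
        if entry["hash"] != _digest(prev_hash, entry["payload"]):
            return False
        prev_hash = entry["hash"]
    return True


review_log: List[dict] = []
append_entry(review_log, {"reviewer": "tax.manager", "decision": "rejected AI deduction"})
append_entry(review_log, {"reviewer": "tax.director", "decision": "approved filing"})
print(verify(review_log))
```

Changing any stored payload after the fact makes `verify` return `False`, which is the property an auditor would care about. A hash chain only detects tampering; it does not prevent it, so it complements access controls and does not replace them.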

II. Architecture: Transitioning to the “Predictive” Stage

The “Tech Stack Prison” is one of the greatest barriers to AI-driven efficiency. Companies stuck in Stage 2 (Reactive) lack the data integration required to validate AI insights. To scale sustainably, the tax logic must be decoupled from the ERP and implemented as a standalone platform capability. This shift allows probabilistic AI guesses to be validated against hard-coded legal rules in real time.

III. Defense: Re-Defining “Reasonable Care”

In a traditional audit, care is shown through spreadsheets. In an AI-native world, auditors will inspect your governance. Institutionalizing AI governance through a framework such as A.C.E.S. is the only way to satisfy the “Reasonable Care” requirements of modern tax authorities. Without a framework that allows you to “replay” the logic of a 2024 transaction in a 2026 audit window, your AI-driven deductions will be treated as red flags.
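The “replay” idea can be sketched with effective-dated rule versions: each version records when it came into force, and an audit recomputes a transaction with the version that applied on the transaction date, not today's. This is a minimal illustration under stated assumptions; the rates, dates, and names such as `RuleVersion` and `replay` are hypothetical.

```python
import bisect
from dataclasses import dataclass
from datetime import date
from decimal import Decimal
from typing import List


@dataclass(frozen=True)
class RuleVersion:
    effective_from: date
    rate: Decimal  # share of the expense that is deductible


# Hypothetical version history, sorted by effective date (not real tax law).
HISTORY: List[RuleVersion] = [
    RuleVersion(date(2023, 1, 1), Decimal("0.40")),
    RuleVersion(date(2025, 1, 1), Decimal("0.50")),
]


def version_on(history: List[RuleVersion], on: date) -> RuleVersion:
    """Return the latest rule version already in force on the given date."""
    starts = [v.effective_from for v in history]
    index = bisect.bisect_right(starts, on) - 1
    if index < 0:
        raise LookupError("no rule version in force on that date")
    return history[index]


def replay(expense: Decimal, tx_date: date) -> Decimal:
    """Recompute a deduction with the rule that applied when the transaction happened."""
    return expense * version_on(HISTORY, tx_date).rate


# A 2024 transaction replayed during a 2026 audit still uses the 2023 rule version.
print(replay(Decimal("1000"), date(2024, 6, 30)))
print(replay(Decimal("1000"), date(2026, 3, 1)))
```

The design choice here is that rule versions are never overwritten, only appended. That is what lets the 2024 result be reproduced exactly after the rule has changed.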

The Final Takeaway: AI represents the “Product Heart” of the modern finance stack, but it requires a “Compliance Brain” to function safely. To win in the current Global Sentiment Spectrum, you must build for transparency and auditability, not just for speed and cost-reduction.

References & Sources

OECD OPSI: Rules as Code: A Case Study in Public Sector Innovation (2024-2026)

Thomson Reuters Institute: 2026 Corporate Tax Department Technology Report (March 2026)

Financial Reporting Council (UK): Guidance on AI Accountability (March 2026)

Ross Martin Tax: Gary Elden v HMRC (2026) Case Summary (Jan 2026)

K&L Gates: Singapore’s New Model AI Governance Framework for Agentic AI (Feb 2026)

Alvarez & Marsal: Singapore Budget 2026 Spotlight (Feb 2026)

EY Japan: 2026 Japan Tax Reform Highlights (Feb 2026)

IMF/NTA Japan: Tax Administration 2.0 Digital Transformation (2026)

Inland Revenue NZ: OIA: Use of AI at IR (Jan 2026)

ATO Australia: AI Transparency Statement (2026)

BDO Global: Brazil Taxpayer Protection Code 2026 (March 2026)

Olshan Law: IRS AI Use in Tax Enforcement (March 2026)

Baker McKenzie: EU AI Act Compliance Radar (Accessed April 2026)

OECD: Due Diligence Guidance for Responsible AI (Feb 2026)

ACCA Global: Application of PCRT to AI Ethics (Jan 2026)